Login via Remote Desktop

All login-nodes on CLAIX have a running X-server, which allows you to start a remote desktop session.

Using a remote desktop is convenient for running small programs with graphical interfaces or for viewing and editing files. However, many actions on the HPC system, such as submitting jobs to the batch system, still require you to open a terminal and use command-line programs.

The nodes login23-x-1 and login23-x-2 are specifically provided for remote desktop sessions.

Prerequisites: Please ensure that you have completed all the prerequisite steps to access CLAIX.

Web Access

The following login-nodes provide web servers. This allows you to create remote desktop sessions and access CLAIX from your browser:

After opening the website, enter your HPC credentials and complete the second-factor authentication.

For more detailed information, please refer to the official Starnet documentation.

Desktop Client

A locally installed desktop client offers better performance than web access.

The FastX Desktop Client can be downloaded from Starnet.

Info: To reduce the number of times you need to authenticate for logins, you can set up key-pairs and ssh-agents.
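As a rough illustration of the key-pair and agent setup mentioned above, the following Python sketch renders an OpenSSH client configuration entry (for `~/.ssh/config`) for one login-node. The keywords `Host`, `HostName`, `User`, `Port`, `IdentityFile`, and `AddKeysToAgent` are standard OpenSSH client options. Everything else is an assumption made for the example: the alias `claix-x1`, the username `ab123456`, and the key path are placeholders, and the short node name has to be replaced by its fully qualified hostname. Whether the FastX Desktop Client reads this file is not covered here; the entry is meant for plain `ssh` logins, and the public key still has to be registered as described in the key-pair instructions.

```python
def render_ssh_host_entry(alias, hostname, user, identity_file, port=22):
    """Return an OpenSSH client config block for one login-node.

    All arguments are supplied by the caller; nothing here is
    specific to CLAIX beyond the example values used below.
    """
    lines = [
        f"Host {alias}",
        f"    HostName {hostname}",
        f"    User {user}",
        f"    Port {port}",
        f"    IdentityFile {identity_file}",
        # Ask ssh to hand the key to a running ssh-agent after first use,
        # so the passphrase is not requested again in the same session.
        "    AddKeysToAgent yes",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder values (assumptions): replace the hostname with the
    # fully qualified name of the login-node and use your own account.
    print(
        render_ssh_host_entry(
            alias="claix-x1",
            hostname="login23-x-1",
            user="ab123456",
            identity_file="~/.ssh/id_ed25519",
        )
    )
```

The printed block can be appended to `~/.ssh/config`; afterwards `ssh claix-x1` would use the configured key, provided an `ssh-agent` is running and the key has been accepted by the cluster.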

You can start a remote desktop session as described below. Further information is provided in the official Starnet documentation.

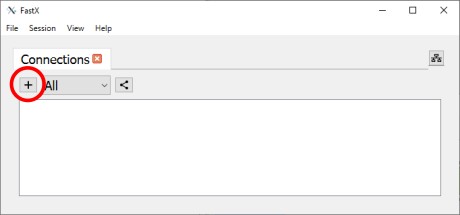

| 1. Press the plus (indicated by a red circle) |  |

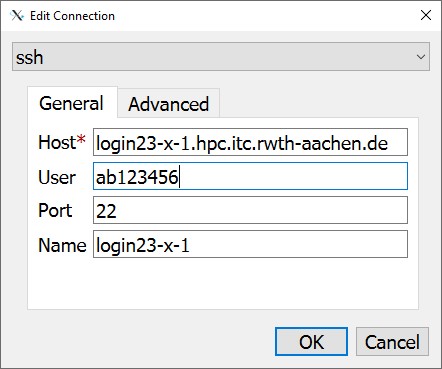

2a. Select "SSH" as connection method 2b. Enter the desired login-node, your username, and a name for the connection. Set "Port" to 22. 2c. Press "OK". |  |

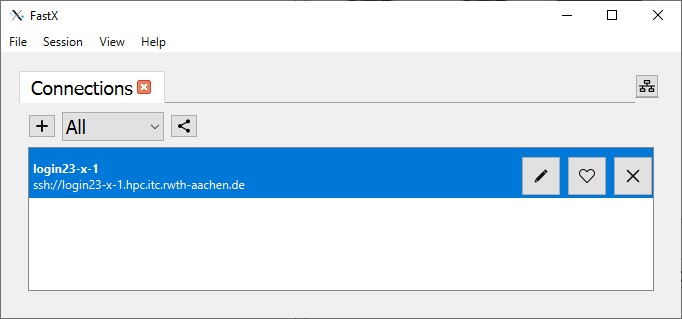

3a. Double-click on the connection that you just configured. 3b. Enter your HPC account credentials. |  |

| 4. Click on the Plus-Symbol to create a new remote desktop session. |  |

5a. Select one of the desktop environments. 5b. Click "OK" |  |
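If the connection configured in step 2 cannot be opened, it can help to check first whether the login-node accepts TCP connections on port 22 from your machine. The sketch below is one possible way to do that with the Python standard library; the function name `ssh_port_reachable` is hypothetical, and the hostname is taken from the command line because the fully qualified node names are not listed here. It only tests reachability and does not attempt to log in.

```python
import socket
import sys


def ssh_port_reachable(host, port=22, timeout=3.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # create_connection resolves the name and connects; the socket
        # is closed again as soon as the with-block is left.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution errors.
        return False


if __name__ == "__main__":
    if len(sys.argv) > 1:
        node = sys.argv[1]
        state = "reachable" if ssh_port_reachable(node) else "not reachable"
        print(f"{node}: port 22 is {state}")
    else:
        print("usage: python check_port.py <login-node-hostname>")
```

A result of "not reachable" usually points to a network issue (for example a missing VPN connection or a firewall) rather than to a problem with the credentials entered in step 3b.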